HavocControl

Command and control for collaborative autonomy: designing the interface that put a fleet of autonomous surface vessels in the hands of a single operator.

defense-tech · command-and-control · hmi · 2024–2025

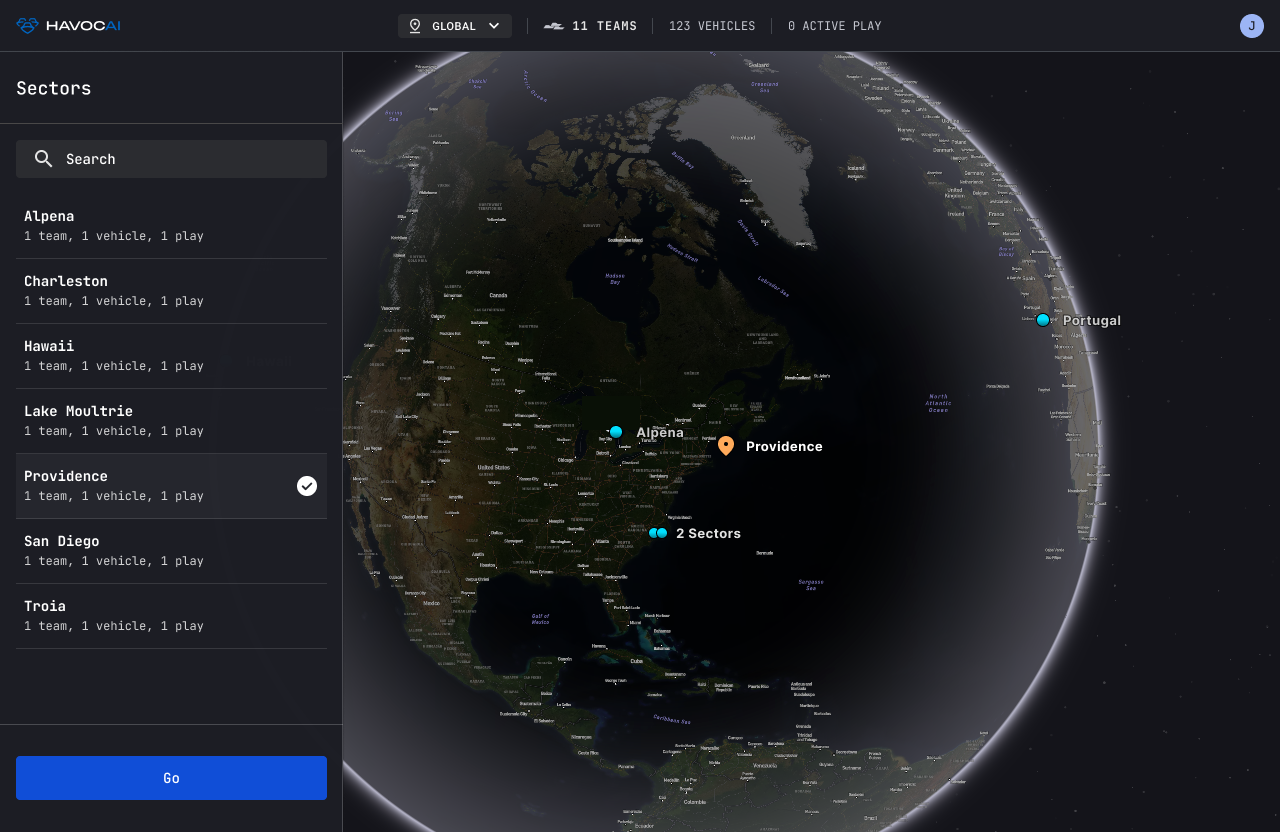

In early 2024, a defense-tech startup asked us to build a best-in-class command interface for fleets of autonomous surface vessels, in just a few months, for a live demonstration to the Navy and DIU. The vessels operated as a cohesive team: onboard AI, emergent behaviors, real-time decisions without constant human input. The interface had to translate all of that into something a field operator could see, understand, and trust, under pressure, in the field, on an iPad Mini.

The Ask

The client was developing collaborative autonomy: multiple autonomous surface vessels operating as a cohesive fleet, each running onboard AI with emergent behaviors, making real-time decisions without constant human input. The vessels could drop cargo in contested waters, track unknown ships, assess affiliation on the fly. This was not a simulation. This was hardware that would perform live, in front of the people who fund defense technology, at an event called Silent Swarm.

"Can you help us build a best-in-class interface for commanding fleets of autonomous surface vessels, in just a few months, so we can demo it live for the Navy and DIU at Silent Swarm?"

The timeline was aggressive. The domain was specialized. The stakes were visible. And the design problem was genuinely hard: how do you give one person meaningful command over a fleet of autonomous agents, each making its own decisions, on a screen the size of an iPad Mini?

// asv.hardware · operational context · silent.swarm

Starting Point: Games, Not Dashboards

I started where I often do in high-cognitive-load domains: gaming.

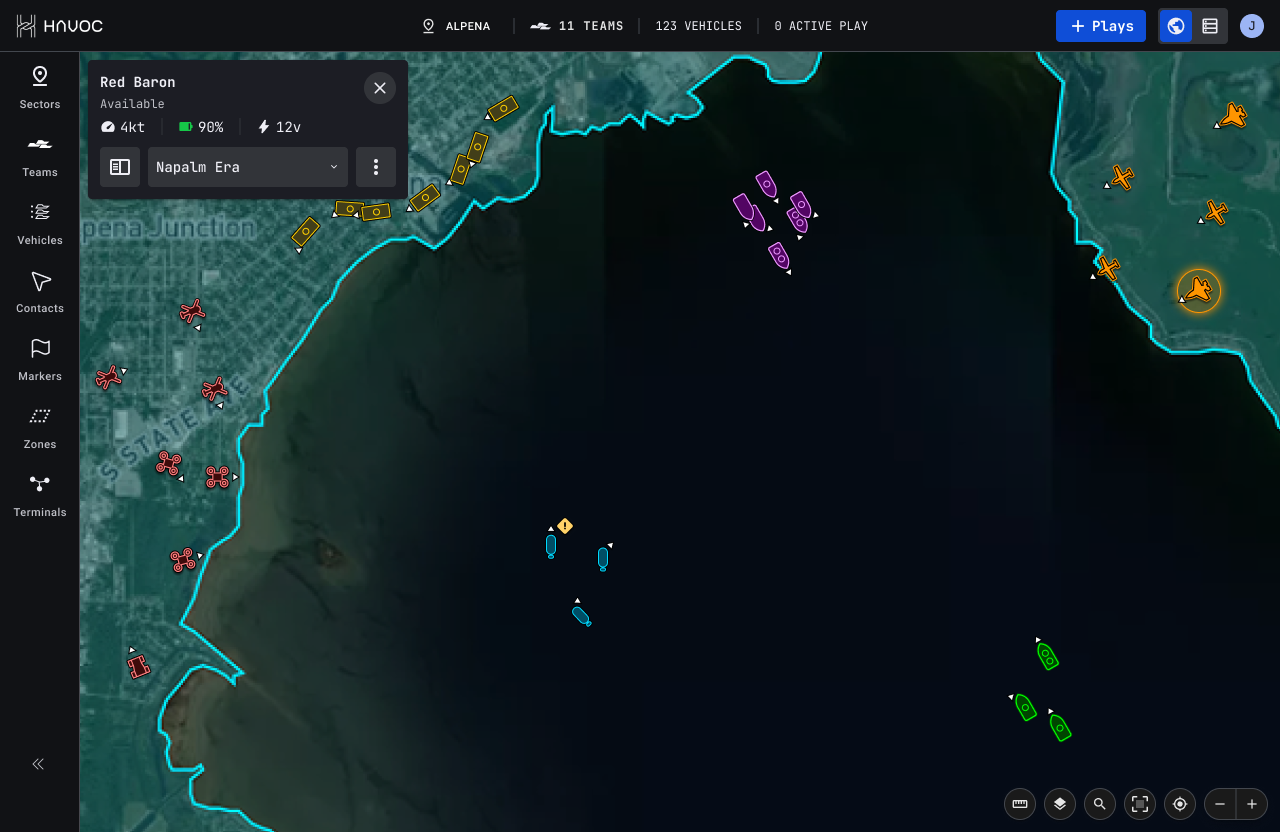

Real-time strategy games have spent decades solving the exact problem we were facing: how do you give a single person command over dozens or hundreds of agents, under time pressure, with incomplete information? RTS titles like Total War and Stellaris had developed rich paradigms for fleet control, unit grouping, and battlefield situational awareness. I studied them systematically for patterns worth translating.

The real breakthrough came from a mobile title: Star Trek: Fleet Command. It showed me how to let an operator move rapidly between individual vessels and fleet-level overview, maintaining broad situational awareness while enabling contextual focus. On a small screen, that's the design problem in miniature.

We also studied the competitive landscape, including Anduril's Lattice, to understand what paradigms were emerging as standard in defense-tech C2 software. The category was young enough that there was still room to define what "best-in-class" looked like.

// interface.overview · fleet-level command · translating rts paradigms to operational reality

Design Strategy: Rethinking the Mental Model

We weren't building a control panel. We were shaping how operators think about collaborative autonomy. That required deliberate reframing across three dimensions.

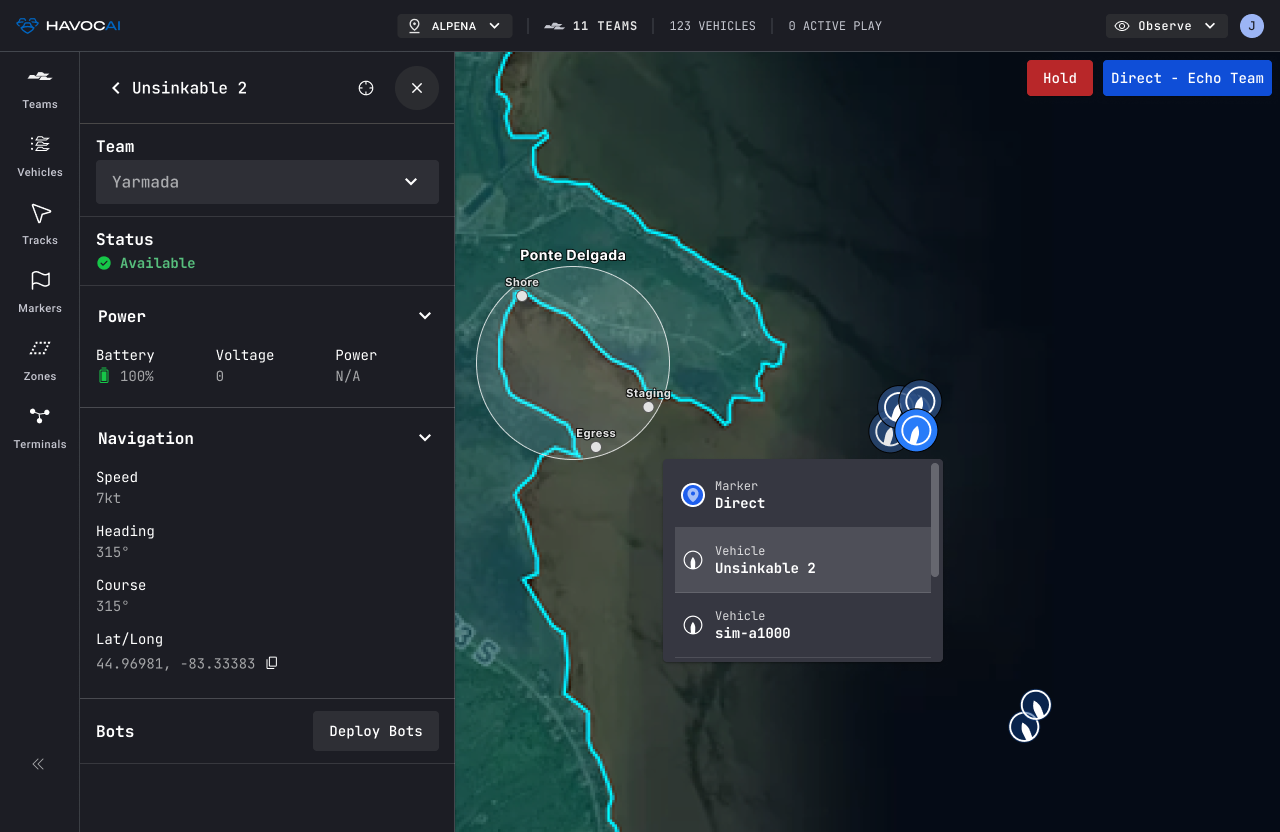

Teams, not vehicles. The first and most important conceptual shift: we moved the primary object of interaction from individual vessels to teams. Individual assignment remained possible but secondary. In a scenario with dozens or hundreds of agents, managing at the vehicle level is a cognitive impossibility. The interface had to invite group-level thinking from the first interaction.
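As an illustration of what "teams as the primary object" can mean in a data model, here is a minimal sketch. It is not the client's implementation: the `Team` and `Fleet` classes, the task strings, and the vessel IDs are all hypothetical, and the only assumption carried over from the project is that team-level assignment is the default path while per-vessel assignment is a secondary override.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional


@dataclass
class Team:
    """Hypothetical primary object of interaction: a named group of vessels."""
    name: str
    vessel_ids: List[str] = field(default_factory=list)
    task: Optional[str] = None  # task issued to the whole team


@dataclass
class Fleet:
    teams: Dict[str, Team] = field(default_factory=dict)
    # Secondary path: per-vessel overrides, kept separate from team tasks.
    overrides: Dict[str, str] = field(default_factory=dict)

    def form_team(self, name: str, vessel_ids: List[str]) -> Team:
        team = Team(name=name, vessel_ids=list(vessel_ids))
        self.teams[name] = team
        return team

    def assign_team(self, name: str, task: str) -> None:
        # Default path: one command addresses every vessel in the team.
        self.teams[name].task = task

    def assign_vessel(self, vessel_id: str, task: str) -> None:
        # Individual assignment stays possible but is an explicit override.
        self.overrides[vessel_id] = task

    def effective_task(self, vessel_id: str) -> Optional[str]:
        # An override wins; otherwise the vessel inherits its team's task.
        if vessel_id in self.overrides:
            return self.overrides[vessel_id]
        for team in self.teams.values():
            if vessel_id in team.vessel_ids:
                return team.task
        return None


fleet = Fleet()
fleet.form_team("alpha", ["asv-1", "asv-2", "asv-3"])
fleet.assign_team("alpha", "track-contact")
fleet.assign_vessel("asv-3", "hold-position")
print(fleet.effective_task("asv-1"))
print(fleet.effective_task("asv-3"))
```

In this sketch the operator issues one command per team, and a vessel only carries its own state when someone deliberately overrides it, which keeps the number of things to track proportional to teams rather than hulls.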

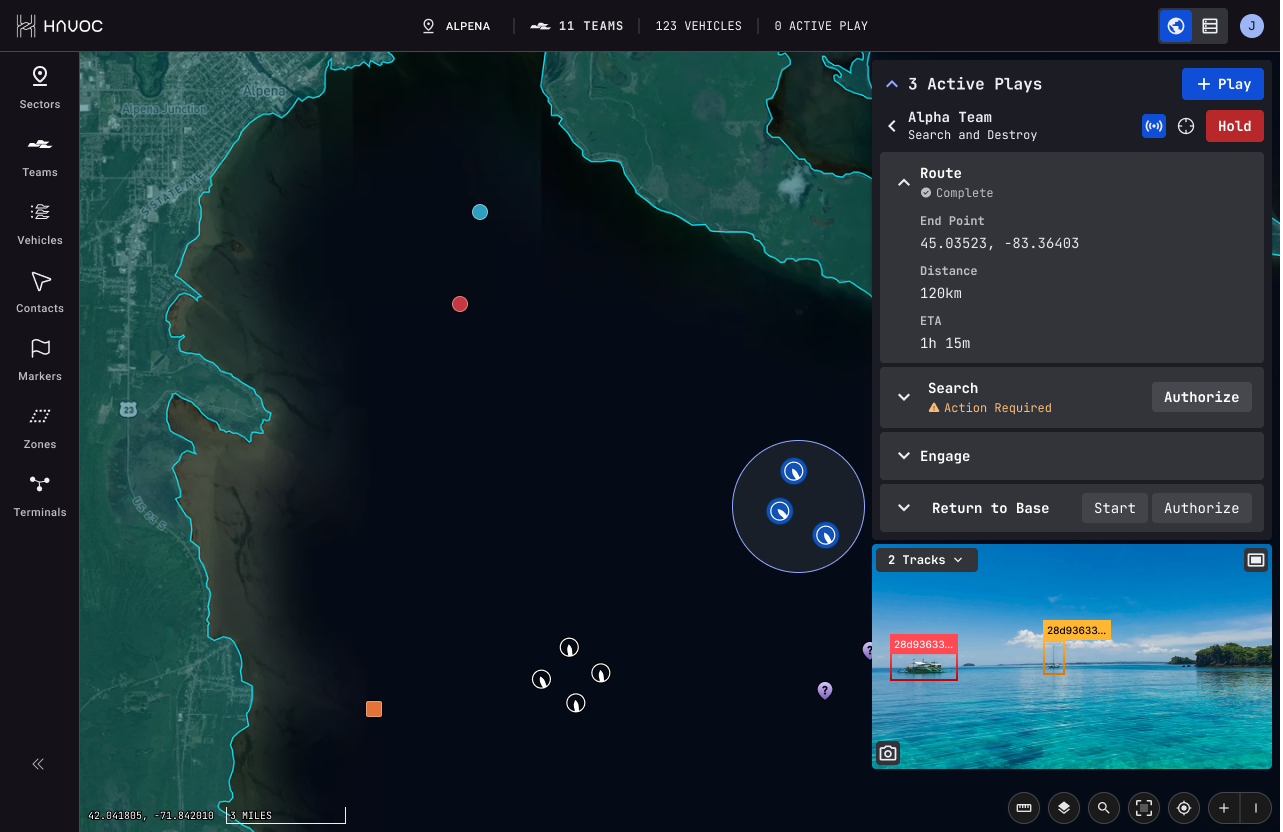

Agent-centric context. We challenged the assumption that operators always need the satellite view. What if, instead, the operator could see what the autonomy sees? We prototyped UI views built around each vessel's own perception data: shoreline recognition, local hazard mapping, affiliation signals. Backend limitations meant we couldn't surface full sensor fusion, but even partial transparency into the autonomy's decision-making was meaningful. Trust in autonomous systems doesn't come from watching dots on a map. It comes from legibility, from understanding why the system did what it did.

iPad Mini as the floor. When we asked stakeholders what devices field operators would use, the answer was genuinely unclear. Field conditions vary, and no single form factor had been standardized. So we set the constraint ourselves: design for the iPad Mini. If the interface worked at that scale, it could expand to larger screens. The constraint would also force clarity, touch optimization, and restraint. Every interaction had to earn its space.

Process Design: Leading Without Authority

As the Silent Swarm demo approached, feature requests started arriving from every direction: field testers, pilot users, internal teams, each with something urgent. None of them were bad ideas. But without a prioritization structure, they were going to splinter the product into a collection of one-off tools, which is the fate of most defense software.

The catch: as an external agency, we didn't have the authority to simply say no. We had to lead through influence and process design.

I proposed and helped stand up a structured intake system: a dedicated Jira board for feature requests and triage, a Slack channel for informal capture and async clarification, and a lightweight process to group and contextualize requests before they touched the roadmap. Simple scaffolding, but it gave us a defensible frame for decisions and gave the client team a language for talking about priority that wasn't just whoever shouted loudest.

Product Drift: Re-Focusing on the Real User

The most subtle and dangerous challenge emerged mid-project. We were building the product for the wrong user.

As autonomy testing ramped up, UX decisions began drifting toward the internal autonomy integration team: engineers and test leads who needed diagnostics, tuning tools, override mechanisms. Important needs. But the product was supposed to be operable by a naval field operator with minimal training, in high-stress conditions, in minutes. Those are very different design targets, and we had been slowly losing sight of the field operator.

I stepped back, took stock of the drift, and authored a Product Alignment Document: a piece of strategic writing that translated leadership's spoken vision into explicit product pillars and re-established two clearly defined user types. The Field Operator (primary): in the field, minimal training, under stress. The Autonomy Integrator (secondary, internal): diagnostics, tuning, system visibility. It gave the team a north star and gave stakeholders a shared vocabulary for evaluating decisions.

Writing the document was, in some ways, the most important design work I did on this project.

// direct.play · list.item.selected · operator-first information hierarchy

Outcome: Silent Swarm and Beyond

The interface debuted at Silent Swarm two months after kickoff. The fleet performed flawlessly. Operators used the UI without hand-holding. The feedback we heard most often:

"So easy to use."

The demo directly supported a $15M funding round.

We were re-engaged to clean up early-stage features, build a scalable design system, and extend the interface as the autonomy platform grew more sophisticated. That phase supported a further $85M raise. The company has continued to scale as a significant player in the defense autonomy space.

// play.details · task.expanded · sensor.fusion · the interface that debuted at silent.swarm

What I'm Proud Of

The game-research angle makes for a good story, and it was genuinely useful. But the work I'm most proud of was less visible: the product alignment document that called out the drift before it became a crisis; the intake process that gave an agency team real influence without formal authority; the decision to design for the iPad Mini and accept the constraints that came with it.

This project required design leadership in the full sense. Not just craft, but strategic framing, stakeholder navigation, and the judgment to zoom out when every immediate pressure was to zoom in. The most consequential design decision I made wasn't about layout or interaction. It was about whose needs we were actually designing for.

Specific interface details, client name, and operational context available under NDA.
